At Cresta, we believe in radical transparency when it comes to building customer trust. As part of that commitment, we share the results of our red teaming exercises - simulated, multi-layered cyberattacks conducted by independent security experts to evaluate how well our systems detect, respond to, and withstand realistic threats. We are the only AI company sharing this level of detail with our prospects and customers, because trust isn’t built by hiding behind audit reports - it’s built by showing how systems are tested, challenged, and hardened in practice.

In this blog post, we would like to share a bit about our last red team engagement: how we set it up and why, how we caught the attackers, and how we hardened our systems even further.

Let's start with the why. Why would you do a red team engagement in the first place?

That’s a great question, and the simple answer is: not a single attacker ever cared about scope statements in your audit reports. Relying purely on compliance audits without ever battle testing your defenses against determined attackers is like calling your unlocked car secure because no one ever tried the handle.

The exciting part about a red team engagement is that it really has no scope; it has objectives. In our case, the objective was gaining access to customer data, the most important thing for us to protect.

We worked again with our award-winning trusted partners at Calif.io to set up an engagement that would simulate the nightmare of every security team: a malicious engineer. Insider threats are becoming increasingly dangerous, with nation-states running large-scale IT worker campaigns that especially target hypergrowth tech companies.

We were fully aware of how difficult it would be to defend against such a threat actor, but three years ago we also made the conscious decision to give our red team more access with each engagement to increase the difficulty level. Attackers do not care about your competing priorities; they execute relentlessly, and we had to match that.

After each engagement, we strengthened our defenses, developed new detection rules, limited access, and even built our own tooling. Nevertheless, the team was slightly stressed about how this engagement might go. So we shipped a laptop to our red team, onboarded them as a “new engineer” (even welcomed them on the all-hands call!), and gave them about eight weeks to prepare and execute an attack that would achieve the objectives while staying completely undetected.

What followed was eight weeks of anticipation; beyond the security team, nobody in the company was informed about the ongoing engagement. We wanted to have the most realistic reaction to the attack possible.

Eventually, the red team managed to find an attack path to achieve their objective. While this might sound concerning at first glance, it’s important to recognize that this is expected - mature security programs must assume that a determined adversary may eventually gain a foothold. What matters most is how quickly that activity is detected and contained.

Industry data reinforces this point: according to Unit 42, in 2025 the fastest attackers were able to move from initial compromise to data exfiltration in just over an hour (72 minutes), with over one in five incidents reaching exfiltration in under an hour.

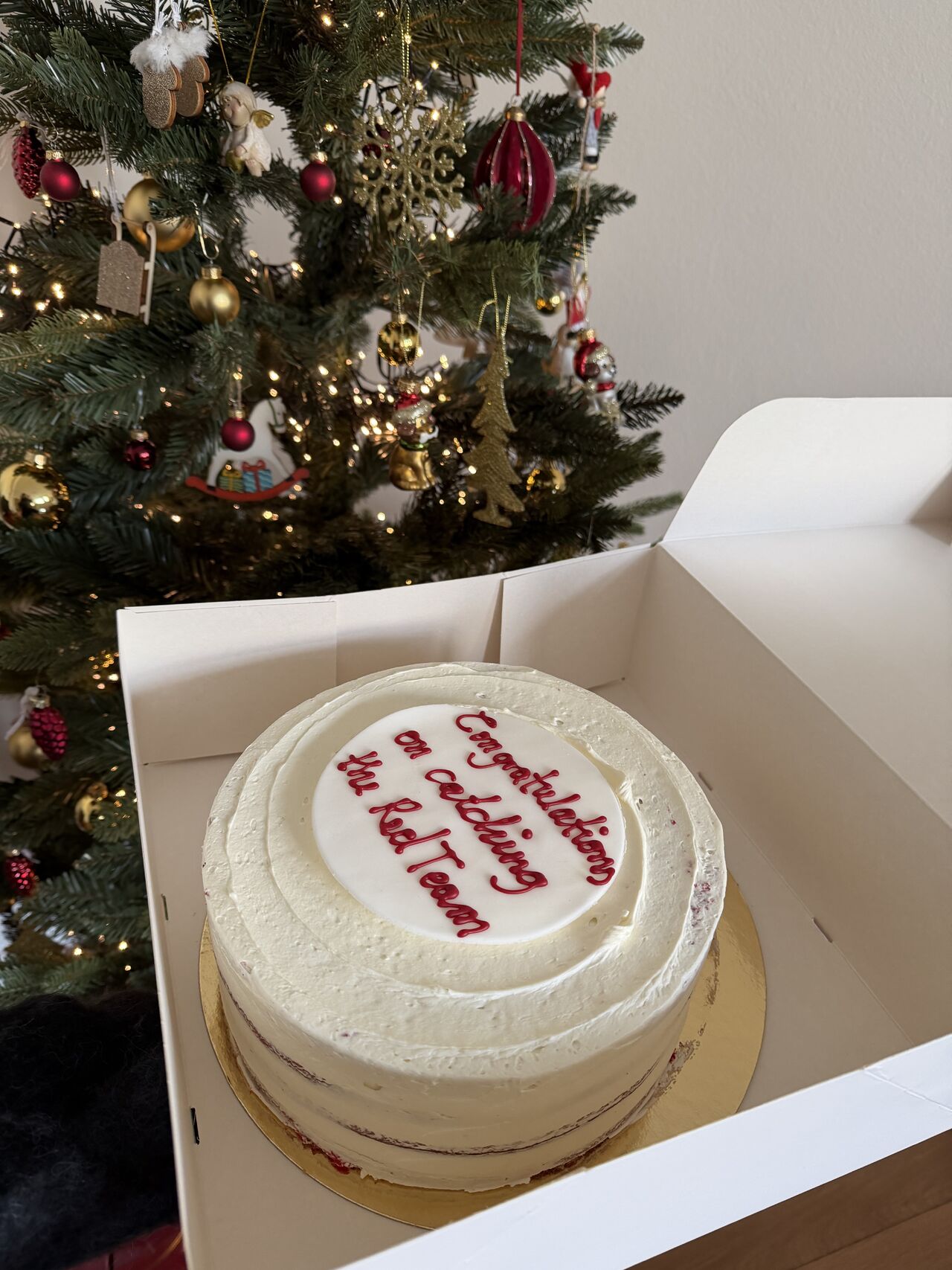

In our case, when the red team executed their attack, our detection and response capabilities caught them within minutes. Investments in deception technology particularly paid off, allowing us to identify and stop attackers who had spent eight weeks attempting to bypass our defenses.

The whole team celebrated with red velvet cake (thanks to Tracebit).

After a quick piece of cake, it was back to work.

We mitigated all findings in two weeks and left the engagement with plenty of ideas for how we can improve our detection and response processes even more. The challenge was implementing significant hardening improvements on a very short timeline without impacting the engineering experience.

Building our own access management tooling allowed us to move much faster. Nevertheless, we still partnered extremely closely with our infrastructure team on rolling out and announcing the changes. We also presented the findings at a Lunch & Learn session, walking the engineering team through each and every finding to explain why certain changes had to be made.

As a result of this transparency and communication, we received nothing but support from the engineering team, because trust needs to be built internally before we can expect it externally.

Reliably defending against insider threats without sacrificing velocity is the gold standard, and we are proud to be getting closer and closer to it. We want to set the standard for radical transparency and hope we can inspire other AI companies to follow us. Third-party risk management that relies on hard evidence benefits the industry and keeps customer data secure.